Hot Right Now

A Giant Energy Machine? 5 Incredible characteristics of the Bosnian Pyramid

YouTube Video Here: https://www.youtube.com/embed/H1r8kxbzEqQ?feature=oembed&enablejsapi=1

From the presence of a mysterious underground energy beam with a radius of 4.5 meters, a frequency of 28 kHz, and a strength of 3.9 V, to ultrasound beams at the Pyramid of the Sun, here are 5 incredible characteristics of the Bosnian Pyramid which...

The Ant People legend of the Hopi Native Americans and connections to the Anunnaki

YouTube Video Here: https://www.youtube.com/embed/LprarIdJGsw?start=78&feature=oembed

The more you look at ancient texts and stories from around the world, the more surprising patterns you see. Some are so glaring that it takes real effort to ignore them, but that's what many people do. One example is from the Hopi Native America...

Experts claim there are 3 hostile Alien species visiting Earth

YouTube Video Here: https://www.youtube.com/embed/FbBc_LmXkbQ?feature=oembed&enablejsapi=1

Here are three of the most hostile aliens that, according to 'experts', have been visiting Earth since time immemorial and have been misinterpreted throughout the centuries as 'gods'.

The idea that we are not the only life forms in the universe has captured the imagination and interest of not only...

“My battery is low and it’s getting dark”: What one of NASA’s rovers taught...

Mars is one of the planets in our solar system that humans know the most about, and for good reason. Scientists have long been drawn to the "Red Planet" because of its similarity to Earth, a similarity that convinces many that humans may be able to make a second home there....

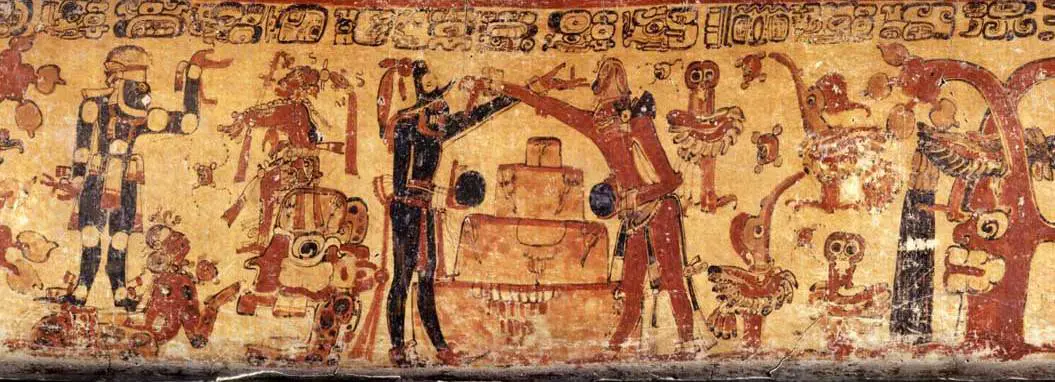

Popol Vuh – The sacred book of the Ancient Maya: Other Beings Created Mankind

The Popol Vuh describes the beings who created mankind as “the Creator, the Former, the Dominator, the Feathered-Serpent, they-who-engender, they-who-give-being,” who “hovered over the water as a dawning light.”

What does this mean? When you think about it, the ancients were telling how “they” possibly referred to as...

How did the last Woolly Mammoths die out on this Russian island near Alaska?

YouTube Video Here: https://www.youtube.com/embed/a2744xoqRKo?feature=oembed&enablejsapi=1

When we think of woolly mammoths, we think of creatures that walked the Earth tens of thousands of years ago before the entire species became extinct due to the warming of the planet. But a new study shows that not all of them perished this early, and...

Trending

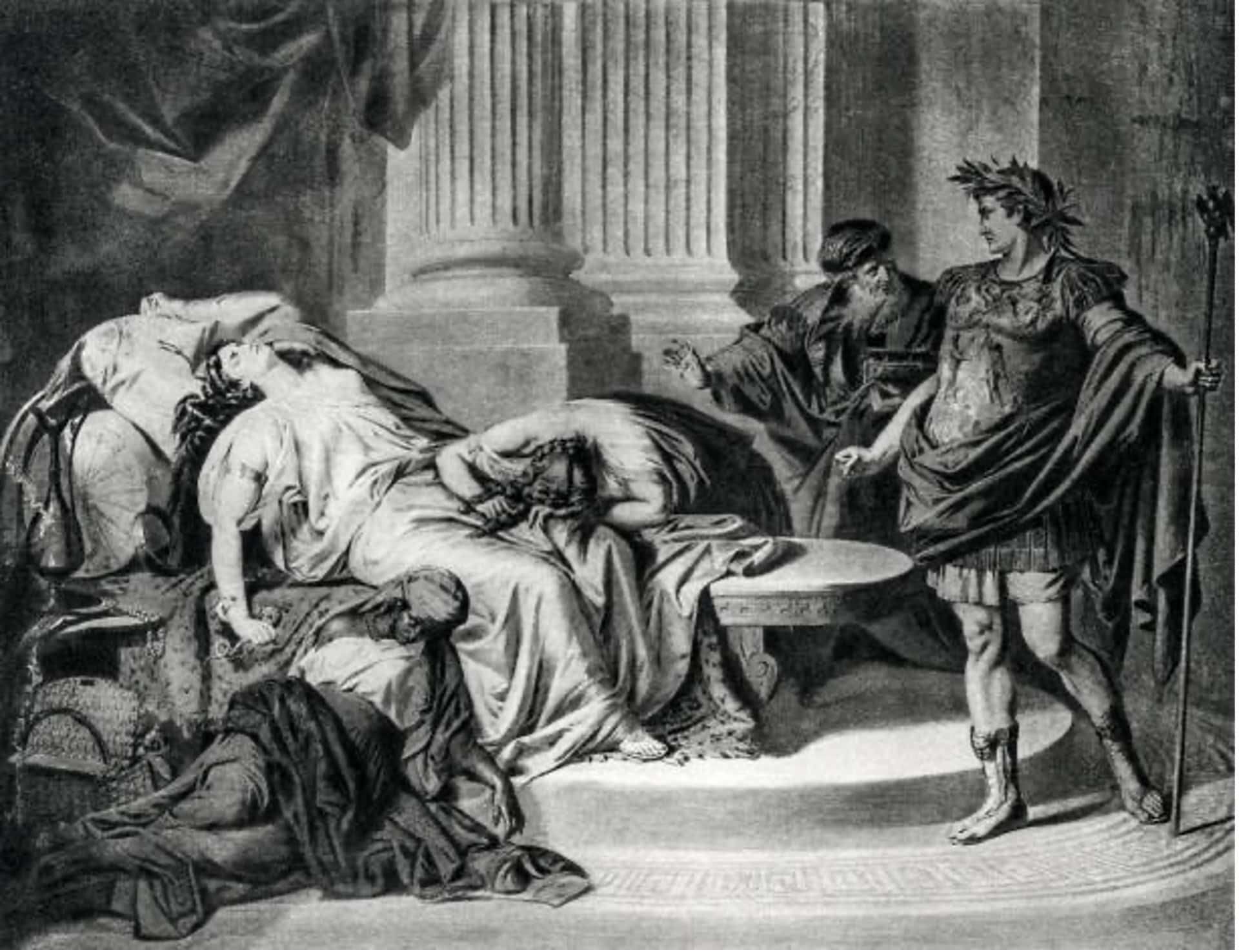


How did Cleopatra die?

Cleopatra VII was the queen of Egypt and one of the most famous women in history. She ruled over the prosperous Egyptian kingdom. She was beautiful, intelligent, and a master of manipulation, and she won the hearts of both Julius Caesar and Mark Antony.

Over time, Cleopatra's ambitions...

Who was Cleopatra

When we hear the name Cleopatra, we think of a beautiful and alluring woman with a tragic story. But who was she? Cleopatra was the last active pharaoh of Ptolemaic Egypt and was briefly succeeded as pharaoh by her son Caesarion. After her reign, Egypt became a province of the...

What did Cleopatra look like?

Cleopatra, the Queen of Egypt, was one of the most renowned figures of her time. She was a beautiful, intelligent, and charismatic woman who used her power and influence to shape the course of history. However, her actual appearance remains a mystery to the world, as there...

News

LATEST ARTICLES

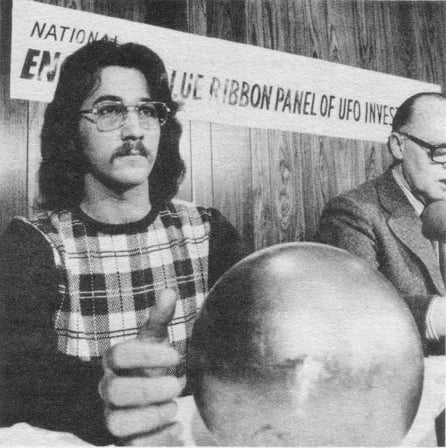

Decades after its discovery, the Betz Sphere remains a scientific mystery

The so-called 'Alien sphere' was discovered in 1974. After several tests, experts concluded that the object was a magnetic sphere that responded to magnetic fields, various sounds, and mechanical stimulation. The sphere was able to withstand a pressure of 120,000 pounds per square inch, which led them to conclude that it was composed of...

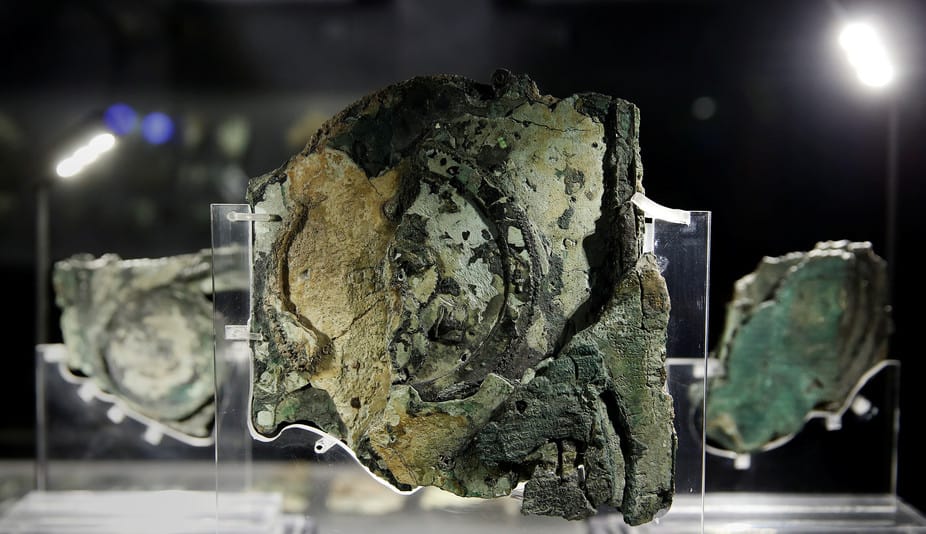

10 fascinating facts about the Antikythera Mechanism: A ‘computer’ created over 2000 years ago

The Antikythera mechanism is considered the first analog computer in history. Researchers still have no idea who built it, exactly what it was used for, or when and where it was built.

Discovered in 1900 among the remains of a shipwreck, and regarded as the first computer made by humans, the Antikythera mechanism remained...

Eerie coincidence? The speed of light constant equals the coordinates of the Great...

Is it just an eerie coincidence that the speed of light equals the coordinates of the Great Pyramid of Giza? The speed of light in a vacuum is 299,792,458 meters per second, and the latitude of the Great Pyramid of Giza is 29.9792458°N. Some say this is...

5 Mind-boggling mysteries about the Universe that science cannot explain

Why is the universe expanding faster and faster? Are there other universes out there? What if black holes are in fact doorways to other worlds? Why is the universe filled with invisible matter? And why is gravity, the weakest of the fundamental forces, the only force...

The Great Pyramid of Giza consists of a staggering 2.3 million blocks

The Great Pyramid of Giza consists of around 2.3 million blocks transported from quarries across Egypt, and an estimated 5.5 million tons of limestone, 8,000 tons of granite, and around 500,000 tons of mortar were used thousands of years ago to build this...

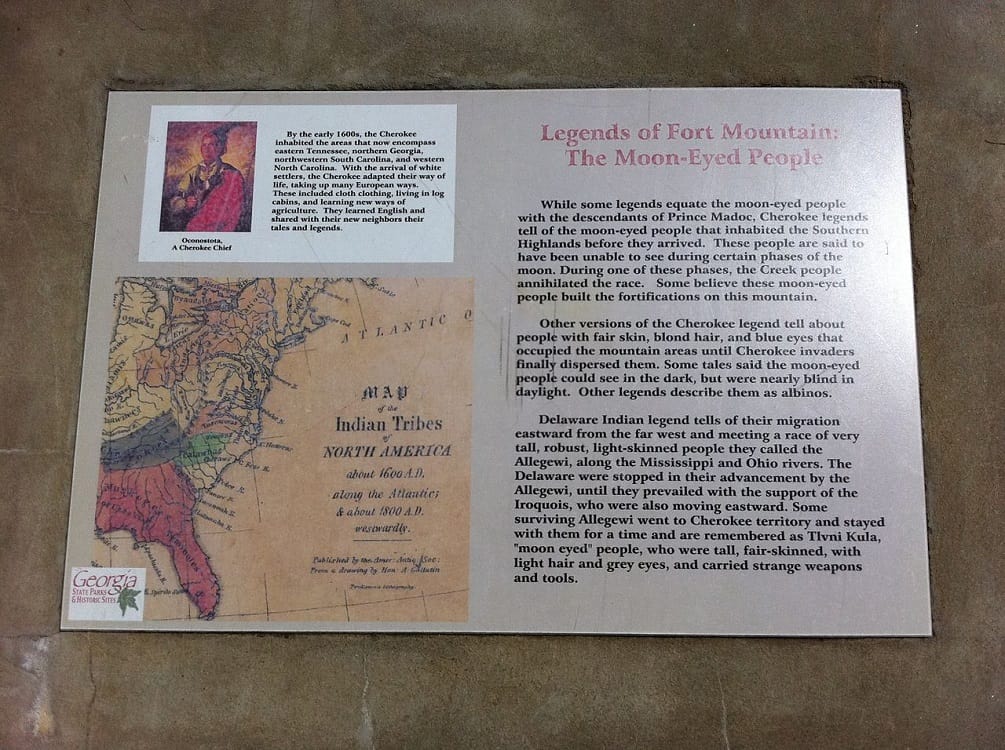

The mystery behind the 18 Giant skeletons found in Wisconsin

Their heights ranged from 7.6 feet to 10 feet, and their skulls, “presumably those of men, are much larger than the heads of any race which inhabit America to-day.” They tended to have a double row of teeth, six fingers, and six toes and, like humans, came in different races. The...

Tesla’s Time Travel Experiment: I could see the past, present and future all at...

Apparently, Tesla, too, was obsessed with time travel. He worked on a time machine and reportedly succeeded, saying, ‘I could see the past, present, and future all at the same time.’

The idea that humans are able to travel in time has captured the imagination of millions around the globe....

The astonishing link between Sacred Geometry and the Universe

Have you ever thought about ‘Sacred Geometry’ and its mysterious connection to everything that surrounds us? As it turns out, there are numerous mysteries that seem to connect Mother Nature and sacred geometry.

Sacred geometry proposes that it is possible to find certain geometric patterns in the universe which are...

Do these NASA images reveal traces of ‘walled cities’ on Mars?

YouTube Video Here: https://www.youtube.com/embed/Uf7dA71IAhc?feature=oembed&enablejsapi=1

According to UFO hunters, there is conclusive evidence that an ancient civilization existed on the surface of Mars in the distant past. As proof, they point to 'shocking' images taken on Mars that appear to show numerous structures and walled cities.

Located near Elysium Planitia...

Another massive ‘alien’ base found on the moon?

YouTube Video Here: https://www.youtube.com/embed/WhfupcX8GPU?feature=oembed&enablejsapi=1

NASA's Lunar Reconnaissance Orbiter (LRO) has captured another strange object on the surface of Earth's natural satellite. According to UFO hunters, it is yet another anomalous structure that defies explanation.

According to researchers, the objects visible in the images are of a peculiar and striking geometry,...